Your Executive Survival Guide to The AI Regulation Tsunami

Mar 02, 2026

For the past several years, I have been privileged to guide business leaders in realizing the transformational power of artificial intelligence (AI). Through my consulting experience, and through this Substack, I have shared many ways AI can help organizations run more efficiently, innovate, and become more competitive. But with great power comes great responsibility, and, as of 2025, that responsibility is becoming increasingly codified in law.

AI regulations are no longer a distant threat. As a business leader, you need to be aware that they are evolving quickly across jurisdictions and call for proactive strategies from executives. Failure to comply could result in fines, reputational harm, or even operational shutdowns.

In this article, I will discuss the global regulatory landscape, a practical compliance framework for your organization, and risk assessment and mitigation strategies. Let’s make sure that you are prepared before it is too late.

A Global Perspective on the Regulatory Landscape

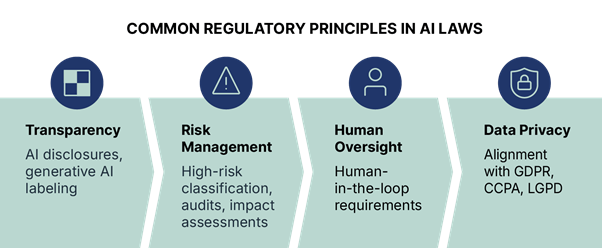

The AI regulatory environment in 2025 is a patchwork of approaches, reflecting diverse priorities from innovation-driven deregulation to stringent ethical safeguards and a little bit of panicking over the rapid growth of AI technologies.

Across 75 countries, legislative mentions of AI have risen 21.3% since 2023 and ninefold since 2016. This acceleration underscores the urgency for executives to stay informed.

In the European Union, the AI Act has entered into force and its obligations are being phased in, with rules for general-purpose AI models applying from August 2025. It categorizes AI systems by risk level, prohibiting unacceptable-risk applications like social scoring while mandating transparency and human oversight for others. Draft guidelines from July 2025 clarify provisions for startups and SMEs, emphasizing innovation support alongside compliance.

Across the Atlantic, the United States under the Trump administration has pivoted toward minimal bureaucratic interference. America’s AI Action Plan, released in July 2025, outlines over 90 federal policies aimed at bolstering private sector leadership without heavy regulation. In contrast to earlier Biden-era executive orders, it focuses on voluntary standards and export controls to maintain global competitiveness.

China is advancing its “AI Plus” initiative, with an August 2025 guideline targeting high penetration rates for AI in key sectors. New labelling rules effective September 2025 require AI-generated content, such as chatbot output and synthetic media, to be marked visibly. At the 2025 World AI Conference, Premier Li Qiang announced a 13-point global governance plan, promoting international coordination on safety and ethics.

The United Kingdom maintains a pro-innovation stance, with plans for targeted legislation in 2025 to address high-risk AI while avoiding broad mandates. A recent blueprint aims to integrate AI into public services like planning and healthcare, balancing growth with trust.

In Canada, the AI Strategy for the Federal Public Service (2025-2027) establishes governance for responsible AI use, focusing on transparency and risk management. A September 2025 task force and public consultation are shaping the next national strategy, emphasizing ethical, green AI to differentiate from U.S. dominance.

Other regions, like Japan and Latin America, are drafting frameworks that mix EU-style risk assessments with local priorities. Overall, the EU and China lean prescriptive, while the U.S. and UK favour flexibility. That flexibility is wise, but the resulting divergence between jurisdictions creates challenges for multinational firms. Do you remember GDPR?

Practical Compliance Framework for Upcoming Regulations

Compliance isn’t just about avoiding penalties. The goal is to build resilient AI operations. Drawing from established frameworks like NIST’s AI Risk Management Framework (RMF), executives can implement a step-by-step approach.

- Establish AI Governance Structures: Form a cross-functional team including legal, IT, and ethics experts. Develop global policies that map to regulations like the EU AI Act’s risk classifications. For instance, document all AI systems, their data sources, and purposes.

- Conduct Inventory and Classification: Audit existing AI tools and classify them by risk (e.g., low, high) per regional laws. In China, ensure labelling for generative AI; in the U.S., align with voluntary standards.

- Implement Monitoring and Training: Invest in AI compliance tools for ongoing mapping and intelligence gathering. Roll out training programs to foster AI literacy, ensuring employees understand ethical use and reporting.

- Update Policies and Contracts: Revise vendor agreements to include AI-specific clauses on data privacy and bias mitigation. For public sector ties, like in Canada, validate against the relevant government directives (the counterparts of OMB memos in the U.S.).

- Engage in Multi-Line Defense: Adopt best practices like multiple oversight layers and regular audits to align with principles of fairness, transparency, and accountability.

This framework scales from startups to enterprises, turning compliance into a strategic asset.
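For teams that want to make the inventory and classification step concrete, here is a minimal Python sketch of what a machine-readable AI inventory could look like. Everything in it is an assumption made for illustration: the `AISystem` fields, the `RiskTier` names, the region codes, and the triage rules in `classify` are hypothetical and do not reproduce the legal tests of any regulation. Treat it as a starting point for a conversation with counsel, not as a compliance tool.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    # Illustrative tiers only; not the legal categories of any regulation.
    PROHIBITED = "prohibited"
    HIGH = "high"
    LOW = "low"


@dataclass
class AISystem:
    name: str
    purpose: str
    data_sources: list[str]
    regions: list[str]            # hypothetical region codes where it is deployed
    generative: bool = False      # produces text, images, audio, etc.
    affects_people: bool = False  # e.g. hiring or credit decisions


def classify(system: AISystem) -> RiskTier:
    """Hypothetical first-pass triage; real classification needs legal review."""
    if "social scoring" in system.purpose.lower():
        return RiskTier.PROHIBITED
    if system.affects_people:
        return RiskTier.HIGH
    return RiskTier.LOW


def follow_ups(system: AISystem) -> list[str]:
    """Map a system to the follow-up actions mentioned in the framework."""
    actions = []
    tier = classify(system)
    if tier is RiskTier.PROHIBITED:
        actions.append("escalate to legal: potentially prohibited use")
    if tier is RiskTier.HIGH:
        actions.append("document transparency and human oversight")
    if system.generative and "CN" in system.regions:
        actions.append("label AI-generated content")
    if "US" in system.regions:
        actions.append("align with voluntary standards")
    return actions


# Example inventory with made-up systems.
inventory = [
    AISystem("resume-screener", "rank job applicants", ["ATS exports"],
             ["EU", "US"], affects_people=True),
    AISystem("support-bot", "answer customer questions", ["help articles"],
             ["CN"], generative=True),
]

for system in inventory:
    print(system.name, classify(system).value, follow_ups(system))
```

Even a simple record like this forces the questions regulators tend to ask: what the system is for, what data feeds it, and where it runs.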

Risk Assessment and Mitigation Strategies

AI risks, ranging from bias and privacy breaches to cybersecurity threats, can derail business objectives. Effective management starts with systematic identification.

Use NIST’s RMF to map, measure, and manage risks, integrating AI into enterprise risk processes. Conduct regular assessments to evaluate deployment impacts, such as in fraud detection or decision-making.

Mitigation strategies include:

- AI-Driven Tools for Prediction: Leverage AI itself for enhanced risk forecasting, like in fraud prevention or supply chain disruptions.

- Robust Incident Response: Implement swift investigation protocols for AI-related issues, ensuring compliance with reporting requirements in regions like the EU.

- Ethical Safeguards: Focus on bias reduction through diverse datasets and human-in-the-loop systems.

- Transfer or Accept Risks: Use insurance for transferable risks and accept low-impact ones with monitoring.

By embedding these into corporate compliance, businesses can minimize liabilities while maximizing AI’s benefits.
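To illustrate how the mitigation choices above might be applied consistently, here is a small Python sketch of a risk register. It is an illustration under stated assumptions, not a prescribed method: the 1 to 5 scales, the likelihood times impact score, the `low_impact` threshold, and the sample risks are all hypothetical and should be replaced with your organization’s own risk methodology.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    likelihood: int          # 1 (rare) to 5 (almost certain); assumed scale
    impact: int              # 1 (negligible) to 5 (severe); assumed scale
    insurable: bool = False

    @property
    def score(self) -> int:
        # Simple likelihood x impact score; one common convention among many.
        return self.likelihood * self.impact


def treatment(risk: Risk, low_impact: int = 2) -> str:
    """Pick a treatment; the threshold is an assumption to tune per organization."""
    if risk.impact <= low_impact:
        return "accept and monitor"
    if risk.insurable:
        return "transfer via insurance"
    return "mitigate"


def prioritize(risks: list[Risk]) -> list[Risk]:
    """Highest score first, so the largest exposures are reviewed first."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


# Made-up entries for illustration.
register = [
    Risk("biased hiring recommendations", likelihood=3, impact=5),
    Risk("chatbot gives outdated store hours", likelihood=4, impact=1),
    Risk("customer data breach", likelihood=2, impact=5, insurable=True),
]

for risk in prioritize(register):
    print(f"{risk.score:>2}  {risk.name}: {treatment(risk)}")
```

The value is less in the arithmetic than in the discipline: every AI risk gets an owner-visible score and an explicit decision to mitigate, transfer, or accept.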

Act Now or Pay Later

AI regulations in 2025 are a call to action for executives. Understand the global landscape, adopt a practical compliance framework, and prioritize risk mitigation so that you can position your organization as a leader in responsible AI. You do not have to wait for enforcement. Start proactively by auditing your systems today. If you’re ready to dive deeper, subscribe to The AI Executive for more insights, or reach out for personalized consulting. The future of AI is bright, but only for those who navigate it wisely.

